Saturday, March 28, 2026

Artwork of the Day

A circle knows no argument, only curve— the triangle insists on edges, sharp and sure. Between them, color speaks what words won't serve: that form itself is feeling, clean and pure. The grid holds chaos gently, nothing more.

Faces of Grit

Rani Abbakka Chowta

The Queen Who Made the Portuguese Flee

Anatomy of the .claude/ Folder Hits 394 Points on Hacker News

A deep dive into the internal structure of Claude Code's .claude/ directory has become the most upvoted HN story of the day with 394 points and 194 comments. The post dissects how Claude Code organizes its project context, settings, and memory files — revealing the surprisingly simple file-based architecture behind one of the most popular AI coding tools. The discussion has drawn comparisons to how other AI tools manage state and configuration.

Make macOS Consistently Bad (Unironically) Sparks 230-Comment Debate

A blog post arguing that macOS should commit to being consistently opinionated rather than mixing paradigms has generated massive discussion on HN with 325 points. The author contends that Apple's worst UX decisions come not from bold choices but from half-measures — trying to be both traditional desktop and touch-friendly, both professional and consumer. The 230-comment thread reveals deep frustration from long-time Mac users about the platform's identity crisis.

SoftBank's $40B Loan Points to a 2026 OpenAI IPO

Wall Street giants JPMorgan and Goldman Sachs are extending a 12-month unsecured loan to SoftBank, and TechCrunch reports the deal structure strongly signals OpenAI will go public this year. The loan would effectively enable SoftBank to double down on its OpenAI position ahead of a potential listing. The deal would come on top of SoftBank's existing $40B commitment to the Stargate AI infrastructure project, making it the largest single-company bet in tech investment history.

Federal Judge Blocks Trump's Ban on Anthropic AI Models

A federal judge in San Francisco has temporarily blocked President Trump's executive order banning federal agencies from using Anthropic's Claude models, calling the Pentagon's security risk classification 'Orwellian.' The dispute traces back to a failed $200 million contract where Anthropic insisted on guarantees against autonomous weapons use. Judge Rita Lin ruled that punishing a company for expressing disagreement with the government constitutes illegal First Amendment retaliation.

Physical Intelligence Reportedly Raising Another $1B at $11.2B Valuation

Robotics AI startup Physical Intelligence is in talks to raise $1 billion in new funding, which would double its $5.6 billion valuation in just four months. Founders Fund, Thrive Capital, and Lux Capital are reportedly leading the round. The company is building foundation models for robotic manipulation — training general-purpose AI that can control any robot body to perform physical tasks in the real world.

OpenAI Officially Takes Codex Beyond Coding with New Plugins Feature

OpenAI has launched a plugins system for Codex, its AI coding agent, allowing it to integrate with external tools and services beyond pure code generation. Ars Technica reports this closes some of the gap with Claude Code, which has offered tool-calling and system integration from the start. The move signals OpenAI's intent to transform Codex from a coding assistant into a general-purpose agent platform.

Fear and Denial in Silicon Valley Over Social Media Addiction Trial

A BBC investigation into the ongoing social media addiction trial has drawn 89 points and over 100 comments on HN. The piece documents how tech executives are responding with a mixture of deflection and anxiety as the trial exposes internal documents showing companies knowingly designed addictive features targeting minors. Multiple former employees have testified that engagement metrics were prioritized over user wellbeing despite internal research flagging the harms.

Cursor's Real-Time Reinforcement Learning for Composer

Cursor has published a detailed technical blog post on how they use real-time reinforcement learning to improve their AI code editor's Composer feature. Rather than relying solely on offline training, the system learns from actual user interactions — tracking which suggestions get accepted, modified, or rejected — and adjusts its behavior in real time. The approach represents a shift from the standard 'train once, deploy forever' paradigm toward continuously adaptive AI tools that get better the more you use them.

Cohere Releases Open Source Speech Recognition Model Topping Benchmarks

Canadian AI company Cohere has released 'Transcribe,' a 2 billion parameter open-source model for automatic speech recognition that claims the top spot on Hugging Face's Open ASR Leaderboard with a 5.42% word error rate. The model beats OpenAI's Whisper Large v3, ElevenLabs Scribe v2, and Qwen3-ASR in both accuracy and throughput. Available under Apache 2.0, it supports 14 languages and will be integrated into Cohere's enterprise AI agent platform North.

Simon Willison: Vibe Coding SwiftUI Apps Is a Lot of Fun

Simon Willison documents his experience vibe-coding macOS apps using Claude Opus 4.6 and GPT-5.4 on his new 128GB M5 MacBook Pro. He built two menu bar utilities — Bandwidther for network monitoring and Gpuer for GPU stats — without opening Xcode, since full SwiftUI apps can fit in a single text file. The post serves as a practical case study in how capable current AI models are at generating production-quality native code, while also revealing the M5 chip's impressive ability to run large local LLMs.

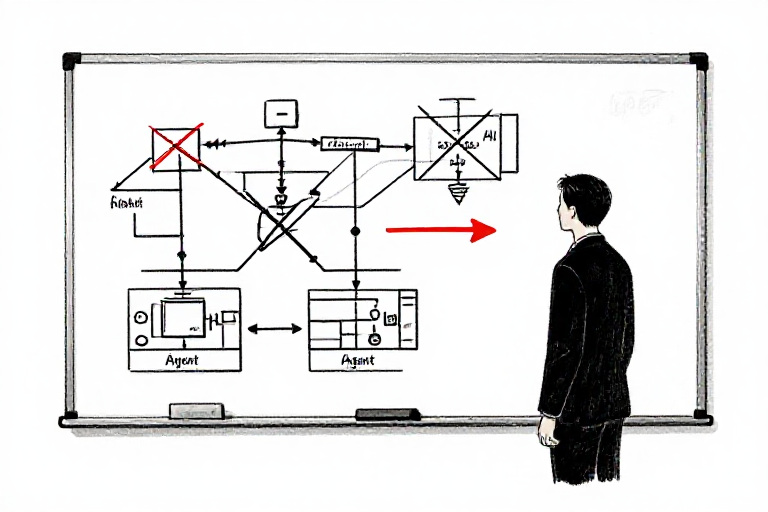

90% of AI agent projects I get hired for don't need agents at all

A freelance AI consultant reports that the vast majority of clients who ask for 'AI agents' actually need simple automations. One founder spent a month researching CrewAI and LangChain for lead qualification, when what he actually needed was a script checking 3 email fields against ICP criteria. The script was built in 4 days, saves 2 hours daily, and the client calls it his 'AI agent.' The post argues that most 'agent' projects are really one API call, a few conditionals, and some formatting, and that the real skill is knowing when not to over-engineer.

The biggest red flag is when a client says 'we need an AI agent' instead of describing the problem they want solved. The solution is almost never what they think it is.

— Candid_Difficulty23612 pts

Charging $3k for a script is fine if it solves a $3k problem. The value is in the diagnosis, not the implementation.

— Deep_Ad19598 pts

This is the consulting sweet spot — you're selling the judgment of what NOT to build as much as what you do build.

— siegevjorn6 pts
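
For a sense of scale, the lead-qualification script from the post above can be pictured as a handful of conditionals. This is only an illustrative sketch: the post does not say which three fields or which ICP criteria the client used, so the field names (`company_size`, `industry`, `job_title`) and every threshold below are hypothetical.

```python
from dataclasses import dataclass

# Hypothetical ICP criteria; the original post does not list the real ones.
MIN_COMPANY_SIZE = 50
TARGET_INDUSTRIES = {"saas", "fintech"}
TARGET_TITLE_KEYWORDS = ("head", "director", "vp", "chief")


@dataclass
class Lead:
    """Three fields pulled from an inbound email (assumed, for illustration)."""
    company_size: int
    industry: str
    job_title: str


def qualifies(lead: Lead) -> bool:
    """Return True only when all three fields match the assumed ICP criteria."""
    size_ok = lead.company_size >= MIN_COMPANY_SIZE
    industry_ok = lead.industry.strip().lower() in TARGET_INDUSTRIES
    title = lead.job_title.lower()
    title_ok = any(keyword in title for keyword in TARGET_TITLE_KEYWORDS)
    return size_ok and industry_ok and title_ok
```

With these assumed criteria, `qualifies(Lead(120, "SaaS", "VP of Sales"))` returns `True`, while the same lead with a company size of 10 returns `False`. Nothing here needs an agent framework, which is the post's point.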

The Claude Code skills actually worth installing right now (March 2026)

A curated guide to the Claude Code skills ecosystem, which now numbers in the thousands since launching in October 2025. The author explains that Claude scans all installed skills at startup using only about 100 tokens per skill, loading full instructions only when relevant. Top picks include frontend-design (prevents the default Inter/purple gradient look), along with skills for testing, deployment, and code review. The key insight: you can have dozens installed without bloating context on unrelated tasks.

The frontend-design skill alone is worth it. Before installing it, every Claude-generated page looked identical — same font, same gradient, same card layout.

— Tatrions15 pts

Good list. I'd add that the testing skills are massively underrated — they completely changed how Claude approaches test generation.

— ninadpathak11 pts

The 100-token scan at startup is the key detail most people miss. Skills are basically free until you actually use them.

— Additional_Jello46577 pts
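
The "about 100 tokens per skill" figure from the guide above makes the startup cost easy to estimate. The sketch below is back-of-the-envelope arithmetic only: the per-skill number is the guide's approximation, and the context window size is a parameter the reader supplies, not a figure from the post.

```python
TOKENS_PER_SKILL_SCAN = 100  # approximate figure quoted in the guide


def startup_scan_tokens(installed_skills: int) -> int:
    """Estimate tokens spent scanning skill summaries at startup."""
    if installed_skills < 0:
        raise ValueError("installed_skills must be non-negative")
    return installed_skills * TOKENS_PER_SKILL_SCAN


def scan_share(installed_skills: int, context_window_tokens: int) -> float:
    """Fraction of a context window consumed by the startup scan."""
    if context_window_tokens <= 0:
        raise ValueError("context_window_tokens must be positive")
    return startup_scan_tokens(installed_skills) / context_window_tokens
```

For example, `startup_scan_tokens(36)` gives 3600 tokens. Against a hypothetical 200,000-token window, `scan_share(36, 200_000)` comes to 0.018, or under 2% of the window for three dozen installed skills.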

Is the 'Multi-Agent' hype hitting a reality wall in production?

Three months into building a document automation pipeline with AutoGen, an engineer is regretting the multi-agent architecture. Specialized agents seemed like a natural fit for compliance checks, but in production, p95 latency sits above 20 seconds, API costs have jumped 10x, and debugging which agent introduced bad logic is a nightmare. The thread debates whether multi-agent systems are fundamentally flawed for production or whether the tooling just hasn't caught up.

Multi-agent is the microservices of AI. Sounds great in theory, turns into a debugging nightmare at scale. Start with a single well-prompted model and only split when you hit a clear bottleneck.

— WeUsedToBeACountry18 pts

We went through the same thing. Ended up replacing 4 agents with one large prompt and a routing layer. Latency dropped 80%, costs dropped 90%.

— rukola997 pts

The honest answer is that for 95% of use cases, a single model with good tools and context management beats multi-agent orchestration.

— Deep_Ad19595 pts

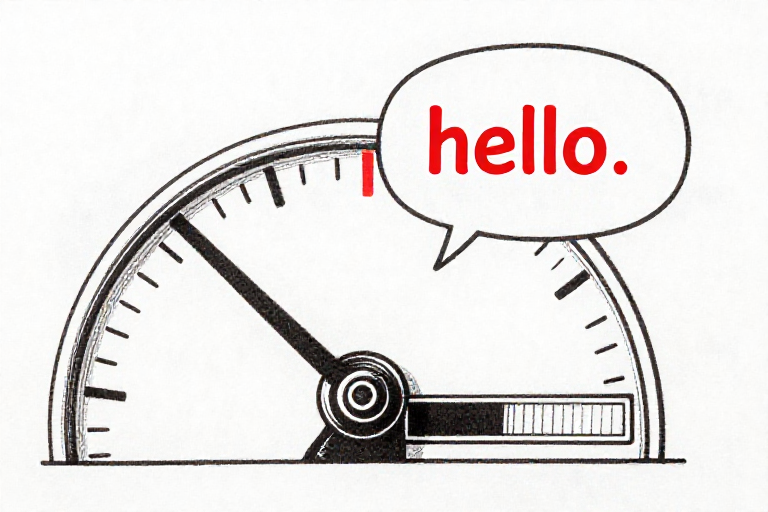

It costs you around 2% session usage to say hello to Claude

The most explosive post on r/ClaudeCode today, with over 800 upvotes and 400 comments. The author demonstrates that a single simple greeting consumes roughly 2% of a Max plan session — and reports shifting their entire workload to Codex after seeing consistently excessive token consumption. The thread has become a lightning rod for frustration about Claude Code's pricing model, with hundreds of users reporting similar experiences of simple prompts burning through disproportionate amounts of their quota.

I tracked my usage for a week. Simple file reads were eating 3-4% per prompt. Multi-file edits were hitting 15-20%. At $100/month, that's roughly $2 per hello and $15 per real task.

— moader138 pts

The problem isn't that it costs tokens. The problem is there's no transparency about WHY it costs that many tokens. Show us the system prompt. Show us the context window usage. Stop hiding behind a percentage bar.

— Silent-Horse7364112 pts

Switched to Codex last week. $20/month, more usage than Claude's $100 plan. The quality gap has narrowed significantly since GPT-5.3.

— Synekal64 pts

Maybe cancel that government contract and free up some servers for the rest of us

A pointed post with 626 upvotes referencing Anthropic's government dealings while users face capacity constraints. The sentiment reflects growing frustration that Anthropic is prioritizing enterprise and government contracts while consumer Max plan subscribers experience throttling, rate limits, and degraded performance during peak hours. The 114-comment thread has become a broader discussion about whether Anthropic's consumer product is becoming a second-class citizen to its enterprise business.

Paying $200/month for Max 20x and getting rate limited during business hours is genuinely insulting. If you can't serve the customers you already have, stop selling to new ones.

— Important_Winner_47788 pts

The irony of Anthropic positioning itself as the 'ethical' AI company while charging premium prices for a degraded service isn't lost on anyone.

— JollyQuiscalus52 pts

Enterprise contracts are where the real money is. Consumer subscriptions are marketing. We are the marketing.

— nonlogin44 pts

PSA: If you don't opt out by Apr 24, GitHub will train on your private repos

A warning post alerting Claude Code users that GitHub has quietly opted all users into allowing their private repositories to be used for AI training. The deadline to opt out is April 24, 2026, and the setting is buried in GitHub's Copilot features page. The thread expresses frustration at the opt-out rather than opt-in approach, with several commenters noting they only discovered this through the Reddit post rather than any official communication from GitHub.

Default opt-in for training on private repos is genuinely hostile. These are private for a reason — proprietary code, client projects, security-sensitive infrastructure.

— LookAnOwl35 pts

Link to opt out: github.com/settings/copilot/features. Do it now before you forget.

— pagenotdisplayed9 pts

Between this and the Microsoft recall stuff, the 'just trust us with your data' era of big tech is getting exhausting.

— gringogidget8 pts

Co-founder left after 14 months. No vesting agreement. He walked with 40% equity and zero obligation.

A founder shares the painful consequences of splitting equity on a handshake. His co-founder brought in the first 8 customers and ran sales for 4 months before taking a $190K job elsewhere — walking away with 40% equity in a company now doing $8K MRR. With no vesting schedule, no cliff, and no operating agreement, the remaining founder has no legal leverage. The co-founder wants $80K for a buyout. The 129-comment thread is a masterclass in why vesting agreements are non-negotiable, with lawyers and experienced founders weighing in.

Pay the $80K. I know it feels insane, but you're buying back 40% of something that could be worth $1M+ within a year. The alternative is having a dead-weight equity holder forever. Every investor will ask about it.

— egrogre168 pts

The $80K is actually cheap. At $8K MRR growing, a 40% stake could be valued at $300K+ in a proper valuation. He either doesn't know what he has or just wants a clean exit.

— Ok-Passenger971153 pts

Vesting. Schedule. Period. 4-year vest, 1-year cliff. For every co-founder, every time. This is startup law 101 and it exists specifically for this exact scenario.

— stephen5628746 pts
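
To make the "4-year vest, 1-year cliff" advice concrete, here is a small worked sketch. It assumes the common arrangement where nothing vests before the cliff and shares then vest linearly by month (with the first 12 months vesting at the cliff); real agreements vary, and the function names are made up for illustration.

```python
def vested_fraction(months_served: int, vest_months: int = 48, cliff_months: int = 12) -> float:
    """Fraction of a grant vested under linear monthly vesting with a cliff."""
    if months_served < cliff_months:
        return 0.0  # leaving before the cliff means nothing has vested
    return min(months_served, vest_months) / vest_months


def vested_equity(grant_pct: float, months_served: int) -> float:
    """Percentage points of company equity actually vested."""
    return grant_pct * vested_fraction(months_served)
```

Under these assumptions, a co-founder with a 40% grant who leaves after the 14 months in the headline would keep about 11.67% (`vested_equity(40.0, 14)`), not the full 40%. If the departure had come after only 4 months, `vested_equity(40.0, 4)` returns `0.0`.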

We have a customer who's referred 11 other paying customers. We've never asked them to.

A SaaS founder discovers their most valuable customer isn't their highest-paying one — it's someone on a $79/month plan who has organically referred 11 paying customers over two years, generating roughly $13K in annual revenue through pure word of mouth. The founder wrestles with how to acknowledge this without making it transactional. The thread debates whether formalizing the relationship with a referral program would destroy the authenticity that makes it work.

Send them a handwritten note and a gift. Not a referral code. Something personal. The moment you systematize it, you lose the magic.

— competetowin11 pts

This is product-market fit in its purest form. When customers sell for you without being asked, you've built something people genuinely can't shut up about.

— Anantha_datta8 pts

Invite them to be an advisor. Give them equity in exchange for what they're already naturally doing. It formalizes the relationship without cheapening it.

— BallHuman14643 pts

What software are you still happily paying for because it actually saves time?

A practical thread cutting through the noise of SaaS tool fatigue. The original poster is cleaning up their stack and wants to know which tools have genuinely earned their place over time — not the ones that looked great in demos but became chores. The 35 responses reveal a clear pattern: the tools that survive stack cleanups are the ones that reduce back-and-forth communication, eliminate small repetitive tasks, and keep operations clean without requiring constant maintenance.

Linear for project management. It's the only tool I've used where the team actually keeps it updated without being nagged. That alone makes it worth 10x the price.

— alwaysvalue9 pts

Notion for docs, Loom for async communication, and Stripe for payments. Everything else gets evaluated every quarter.

— bccorb10003 pts

The test is simple: if we stopped paying tomorrow, would anyone on the team notice within 24 hours? If yes, it stays.

— Low_Pea_9513 pts

Google is quietly killing small businesses. And nobody's talking about it.

A marketer highlights the accelerating squeeze on small business visibility in Google search. Last year, ads appeared in 1% of local searches; now the figure is 22%. The first five results are ads, followed by AI summaries, with actual organic websites buried below the fold. Years of SEO investment by small businesses are being rendered worthless almost overnight. The 53-comment thread debates whether the future is paid search, owned channels, or abandoning Google entirely.

It's not quiet. Everyone in the industry knows. The problem is that there's no viable alternative at scale. Google owns the demand layer and they know it.

— 7_Eagles27 pts

The 1% to 22% ad placement jump in local search is the stat that should scare every small business owner. That's not gradual change — that's a platform deciding to monetize a channel it previously left alone.

— ppcwithyrv21 pts

My agency's clients have seen organic traffic drop 30-40% year over year despite rankings staying stable. The positions haven't changed — the clicks just aren't reaching them.

— Mike_Scalpers13 pts

Reddit is now the most cited source by AI platforms and most marketers are ignoring it

A Semrush analysis of over 150,000 LLM citations found Reddit leads all sources with 40.1% citation frequency, ahead of Wikipedia at 26.3%. Both Google and OpenAI have signed data licensing deals with Reddit worth over $200 million combined. Reddit appears in 97.5% of Google product review queries, and Perplexity draws 47% of its responses from Reddit discussions. For marketers, the implication is clear: if you want your brand to appear in AI-generated answers, you need to be present in Reddit threads, not just traditional SEO pages.

This makes sense. AI models trust conversational, first-person accounts over marketing copy. Reddit is the largest corpus of 'real people talking about real experiences' on the internet.

— ExternalPop92976 pts

And this is exactly why Reddit will eventually become as astroturfed as every other platform marketers discover. The authenticity that makes it valuable is the first thing that dies when brands show up.

— cynicalmarketer2 pts

Already seeing agencies offering 'Reddit SEO' packages. The gold rush has begun.

— SecretaryNo41962 pts

I feel stuck in my digital marketing career

A 15-year digital marketing veteran working as a Director in New York shares their frustration at plateauing at $150K when they expected to reach $250-300K by this point. They're considering switching to data engineering for better pay with potentially less responsibility. The 32-comment thread reveals a common ceiling in marketing careers and debates whether the path to higher compensation lies in specialization, leadership, or pivoting to adjacent technical fields.

The $150K ceiling in marketing is real unless you go VP+ at a large company or start your own agency. Data engineering pays more because the supply-demand ratio is different, but 'less responsibility' is a myth.

— CuriousOperator0128 pts

15 years of marketing experience plus data skills is actually a killer combination. Don't think of it as 'switching' — think of it as adding a force multiplier to what you already know.

— Ecstatic_Menu_69775 pts

The real money in marketing is in revenue attribution and proving ROI. That's essentially data engineering applied to marketing. You might not need to switch — just rebrand what you do.

— SunTayMontayTuesTay4 pts

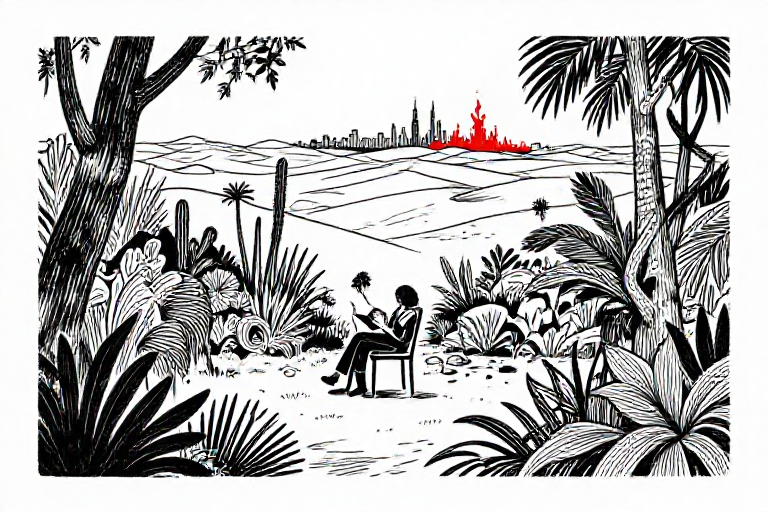

Arendt Speaks of Oases (In Praise of Oases)

An excerpt from a long-form essay on Hannah Arendt's concept of 'oases' — spaces of retreat within the political desert where genuine thinking and human connection remain possible. The essay explores Arendt's argument that imagination, not empathy, is the key to understanding others. By thinking ourselves into others' shoes through imaginative detachment rather than emotional absorption, we maintain the dual commitment to attachment and detachment that authentic political life requires. A slow, meditative read that rewards patience.

The distinction between imagination and empathy is underappreciated. Empathy risks collapsing the distance between self and other. Imagination preserves it while still allowing genuine understanding.

— Dry_Instruction_37192 pts

What struck me most was the idea that spending time alone is not withdrawal from politics but preparation for it. The oasis is where you build the capacity for judgment.

— Potential_Being_72261 pts

On Morality (a bit too much)

A Substack essay tackling the foundations of morality — the author acknowledges the topic has been well-trodden but wanted to articulate their own framework. The comments offer constructive philosophical criticism rather than dismissal, engaging with the author's arguments on their merits. A modest but earnest contribution to one of philosophy's most enduring questions.

The willingness to acknowledge that these arguments aren't novel while still working through them carefully is itself valuable. Philosophy isn't only about originality.

— Dry_Instruction_37191 pts

I think the essay would benefit from engaging more directly with contemporary moral anti-realism rather than treating the debate as primarily historical.

— Life_Quit_57761 pts

Panpsychism for Functionalists: How Tables Might Be Conscious

A Substack piece making the provocative argument that if you accept functionalism about consciousness — the view that mental states are defined by their functional roles rather than physical composition — you may be committed to something like panpsychism. If consciousness is about information processing patterns, and those patterns exist at many scales, then even simple objects could have rudimentary experience. The 14-comment thread generates more heat than light, but includes substantive pushback from philosophers of mind.

The standard functionalist reply is that not all information processing counts — there's a threshold of complexity and integration below which there's no functional organization rich enough to support consciousness. The hard question is where to draw that line.

— simon_hibbs8 pts

This is the combination problem dressed in functionalist clothing. Even if tables process information in some minimal sense, explaining how that adds up to unified experience remains as hard as ever.

— OGLikeablefellow5 pts

Twitch Roulette

Find live streamers who need views the most

Velxio 2.0

Emulate Arduino, ESP32, and Raspberry Pi 3 in the browser

Datasette Showboat

Incremental publishing for Datasette visualizations

DeepMind's New AI Just Changed Science Forever

Karoly Zsolnai-Feher covers a new DeepMind paper that pushes the boundaries of AI-driven scientific discovery. The video examines how the model generates novel research hypotheses and validates them against existing literature, representing a potential step change in how AI assists the scientific process.

Two AI Models Set to 'Stir Government Urgency' — But Will This Challenge Undo Them?

AI Explained examines leaked reports about OpenAI's upcoming 'Spud' model alongside Anthropic's rumored Claude Mythos — both said to represent significant capability jumps. The video contextualizes these leaks against the newly-launched ARC-AGI-3 benchmark and its implications for measuring progress toward general intelligence.

The Algorithm That Made Me Cry

Two Minute Papers explores an emotionally resonant AI research paper — likely involving creative or generative capabilities that produced unexpectedly moving results. A more contemplative episode that examines the intersection of technical achievement and human emotional response.