Tuesday, March 25, 2026

Artwork of the Day

The water writes in silver script on pages pressed from ancient sand, each channel branching, unpredict-- a fractal language, unplanned, that speaks of leaving, and of land.

Faces of Grit

Tete-Michel Kpomassie

The African who dreamed of Greenland

Apple launches Apple Business, an all-in-one platform for companies of all sizes

Apple announced Apple Business, a new integrated platform combining invoicing, payroll, inventory, scheduling, and customer management into a single ecosystem tied to Apple hardware. The launch marks Apple's most aggressive move into the enterprise software space since the original partnership with IBM. Hacker News lit up with 538 points and over 320 comments, with many noting it could threaten Shopify, Square, and Toast simultaneously. The platform leverages Apple Pay, Apple Wallet, and on-device AI for real-time business analytics. Pricing starts free for sole proprietors and scales to enterprise tiers. Critics questioned whether Apple's historically consumer-focused design philosophy can survive the messy realities of B2B workflows.

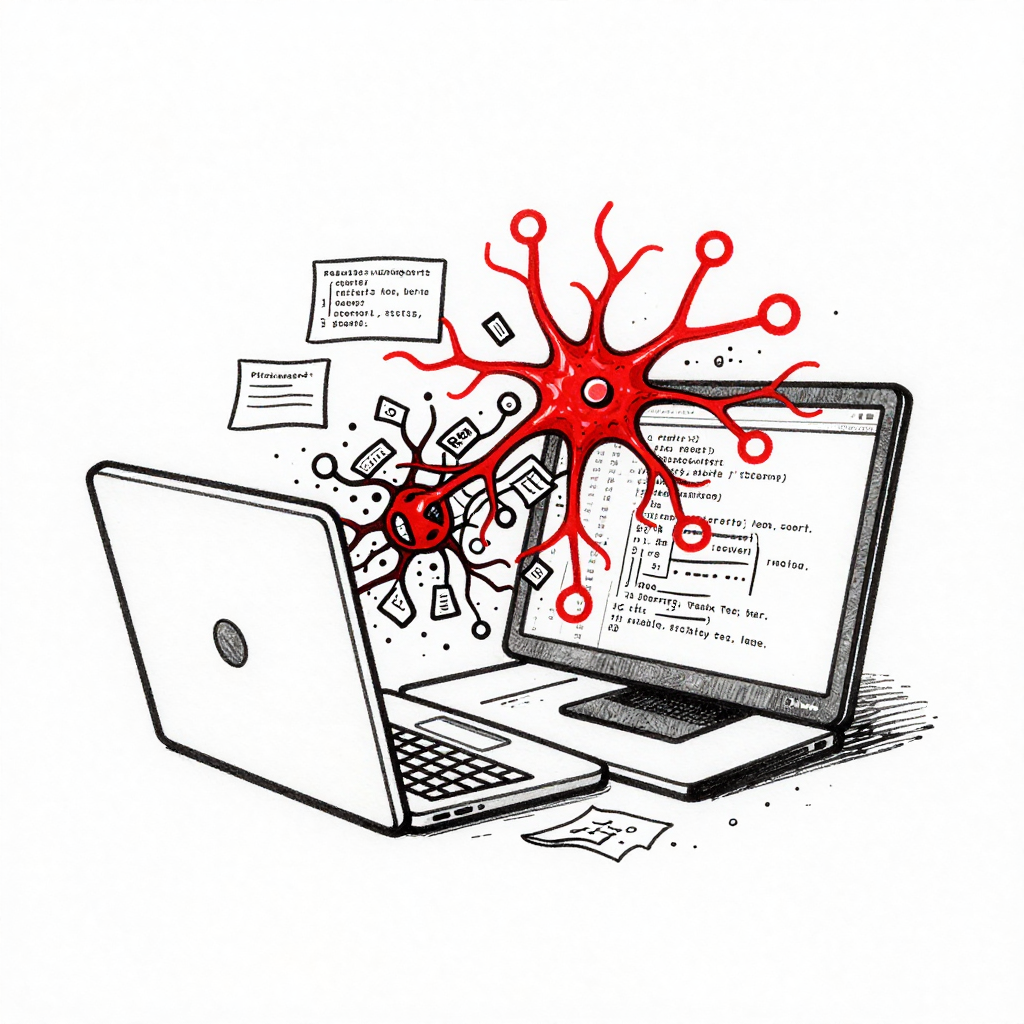

LiteLLM supply chain attack injects malware into AI proxy used across thousands of deployments

The widely used open-source AI proxy LiteLLM was compromised on PyPI, with versions 1.82.7 and 1.82.8 containing malware that steals SSH keys, cloud credentials, database passwords, and Kubernetes configurations. Security researcher Callum McMahon discovered the tampering after the package crashed inside the code editor Cursor, finding no matching release in the official GitHub repository. The malware spreads across Kubernetes clusters and installs permanent backdoors. Nvidia AI Director Jim Fan called it 'pure nightmare fuel,' warning that AI agents could be manipulated through infected files. The incident racked up 533 points on Hacker News with nearly 400 comments debating the fragility of the Python dependency chain. Anyone running affected versions is urged to rotate all credentials immediately.
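For readers who want to check an environment, here is a minimal sketch that compares the installed `litellm` version against the two releases named above. The helper names `is_compromised` and `check_installed` are made up for this illustration, and a clean result only describes the current environment, not whether a bad release was installed in the past.

```python
from importlib.metadata import PackageNotFoundError, version

# Versions named in the report above as containing the malware.
COMPROMISED_VERSIONS = {"1.82.7", "1.82.8"}


def is_compromised(installed_version: str) -> bool:
    """Return True if the version string matches a reported bad release."""
    return installed_version.strip() in COMPROMISED_VERSIONS


def check_installed(package: str = "litellm") -> str:
    """Describe the state of the package in the current environment."""
    try:
        found = version(package)
    except PackageNotFoundError:
        return f"{package} is not installed in this environment"
    if is_compromised(found):
        return f"{package} {found} is a reported compromised release: rotate credentials"
    return f"{package} {found} is not one of the reported compromised releases"


if __name__ == "__main__":
    print(check_installed())
```

Run it with the same Python interpreter your deployment uses, since each virtual environment has its own installed packages.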

OpenAI shuts down Sora as Disney backs out of billion-dollar investment

OpenAI officially pulled the plug on Sora, its AI video generation platform, after Disney walked away from a reported billion-dollar investment deal. Despite the underlying Sora 2 model being technically impressive, there was no sustained interest in an AI-only social video feed, and Hollywood studios ultimately declined to integrate it into production pipelines. The shutdown comes amid broader questions about OpenAI's strategy of building flashy demos versus practical developer tools. On Reddit's r/AI_Agents, the news sparked debate about whether Anthropic's focus on shipping useful infrastructure like Claude Code and MCP forced OpenAI's hand. TechCrunch noted the irony that the one industry most expected to embrace AI video saw the product up close and said no. The Hacker News thread hit 453 points with commenters calling it a 'verdict on vibes versus utility.'

Arm unveils AGI CPU architecture designed for artificial general intelligence workloads

Arm announced a new CPU architecture specifically designed for AGI workloads, marking a major strategic pivot beyond mobile and embedded chips. The AGI CPU integrates specialized instructions for transformer inference, continuous learning, and multi-agent coordination directly into the silicon. With 288 points and 229 comments on Hacker News, the announcement generated intense debate about whether hardware-level AGI optimization is premature or prescient. The architecture targets data center deployments where current GPU-centric approaches hit memory bandwidth bottlenecks. Arm claims 4x better inference-per-watt compared to current solutions for sustained multi-step reasoning tasks. Industry analysts noted the move positions Arm against both Nvidia's GPU dominance and Intel's Gaudi accelerators.

Meta hit with $375 million jury verdict in first-ever child safety trial

A New Mexico jury found that Meta willfully violated state law by misleading users about the safety of its products and engaging in unconscionable trade practices, awarding $375 million in penalties. The verdict marks the first jury decision of its kind against Meta over harm to young people, with the maximum penalty of $5,000 applied to 37,500 violations on each of two counts. TechCrunch emphasized that the dollar amount matters less than the legal precedent, as dozens of similar state lawsuits are watching this case closely. Meta's defense that it already invests heavily in safety tools failed to persuade the jury. Legal experts predict this will accelerate both state-level legislation and federal action on platform accountability. The rest of the country is now watching whether this becomes the template for holding tech companies liable for design choices that harm minors.
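The headline figure can be reproduced from the numbers in the summary. This quick check assumes the $5,000 maximum was applied to all 37,500 violations on each of the two counts, which is the reading that matches the $375 million total.

```python
# Figures taken from the summary above.
MAX_PENALTY_PER_VIOLATION = 5_000  # dollars
VIOLATIONS = 37_500
COUNTS = 2  # assumption: the same violation tally applies to each count

per_count = MAX_PENALTY_PER_VIOLATION * VIOLATIONS
total = per_count * COUNTS

print(f"Per count: ${per_count:,}")  # Per count: $187,500,000
print(f"Total:     ${total:,}")      # Total:     $375,000,000
```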

Kleiner Perkins raises $3.5 billion to go all-in on AI

Legendary venture firm Kleiner Perkins closed $3.5 billion in new funds split between $1 billion for early-stage AI startups and $2.5 billion for late-stage growth businesses. The raise signals continued institutional confidence in AI despite growing skepticism about valuations in the broader market. Kleiner Perkins, which backed Google, Amazon, and Genentech in earlier eras, is betting that the next wave of AI value creation will come from vertical applications rather than foundation model companies. The growth fund will target companies approaching profitability that need capital to scale operations, not research. This is one of the largest VC fundraises of 2026 and positions KP alongside a16z and Sequoia in the AI arms race. Critics note that the sheer volume of capital chasing AI startups may itself be creating bubble dynamics.

Claude Code ships Auto Dream for memory consolidation and Auto Mode for permission safety

Anthropic quietly shipped two major Claude Code features in one day. Auto Dream mimics REM sleep to consolidate the agent's memory files, reviewing past session transcripts, pruning stale or contradictory notes, and organizing what remains into indexed, date-stamped records. The feature addresses a real pain point where Auto Memory files became bloated and degraded agent performance. Separately, Auto Mode introduces a new permissions system where a Sonnet 4.6 classifier reviews every action before execution, blocking anything that escalates beyond task scope or appears driven by hostile content. Simon Willison published a detailed analysis of the default filter rules, noting the thoughtful handling of supply-chain risks in package installation. The Auto Dream post hit 1,652 upvotes on r/ClaudeCode, making it the day's biggest post by far.

Simon Willison: Inside Claude Code's Auto Mode permission safeguards

Simon Willison published a detailed technical analysis of Claude Code's new Auto Mode, which replaces the blunt '--dangerously-skip-permissions' flag with a nuanced permission classifier running on Sonnet 4.6. The system reviews every action against an extensive ruleset: allowing local file operations and read-only API calls, soft-denying force pushes and direct pushes to main, and hard-blocking irreversible destruction, credential exfiltration, and social engineering attempts. Willison highlighted the supply-chain awareness built into the defaults, where installing packages already in a project's manifest is allowed but agent-chosen package names are blocked due to typosquat risk. The system even detects when read-only operations are being used to scout for a blocked action. This represents a significant step toward making autonomous coding agents safe enough for real-world use without constant human supervision.
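To make the tiers concrete, here is a toy sketch of a three-way decision with the manifest check for package installs. It is not how Auto Mode works internally: the real system is described as a Sonnet 4.6 classifier, while this is a static lookup. Every name here (`Decision`, `Action`, `review`, the action labels) is hypothetical, and treating an unlisted package as a soft deny is an assumption, since the summary only says such installs are blocked.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"
    SOFT_DENY = "soft_deny"
    HARD_BLOCK = "hard_block"


@dataclass(frozen=True)
class Action:
    kind: str         # hypothetical label, e.g. "read_file" or "force_push"
    target: str = ""  # e.g. a package name


# Hypothetical labels loosely following the tiers described above.
ALLOWED = {"read_file", "write_local_file", "read_only_api_call"}
SOFT_DENIED = {"force_push", "push_to_main"}
HARD_BLOCKED = {"irreversible_delete", "credential_exfiltration"}


def review(action: Action, manifest: set[str]) -> Decision:
    """Return a decision for one action; the strictest tier is checked first."""
    if action.kind in HARD_BLOCKED:
        return Decision.HARD_BLOCK
    if action.kind in SOFT_DENIED:
        return Decision.SOFT_DENY
    if action.kind == "install_package":
        # Packages already in the project's manifest pass; agent-chosen
        # names do not, because of typosquat risk (tier is an assumption).
        return Decision.ALLOW if action.target in manifest else Decision.SOFT_DENY
    if action.kind in ALLOWED:
        return Decision.ALLOW
    # Assumption: anything unrecognized defaults to caution.
    return Decision.SOFT_DENY


manifest = {"requests", "pytest"}
print(review(Action("install_package", "requests"), manifest).value)  # allow
print(review(Action("install_package", "reqeusts"), manifest).value)  # soft_deny
```

A static table like this cannot notice intent, such as read-only calls used to scout for a blocked action, which is presumably part of why the real feature relies on a model rather than a lookup.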

Final analysis of the 2025 Iberian blackout reveals how policy failures left Spain at risk

ENTSO-E released its final detailed report on the April 2025 Iberian Peninsula blackout that left Spain and Portugal entirely without power. The expert committee had access to sub-second precision logs from major grid hardware, interchange data with France and Morocco, and even performance records from rooftop solar inverter manufacturers. The report confirms that a combination of grid-level voltage oscillations and premature hardware disconnections cascaded into total failure, largely consistent with preliminary findings but with far more detail on how specific policy choices allowed too much equipment to disconnect right at the edge of normal operating conditions. The analysis offers a clear roadmap for preventing recurrence, focusing on updating the thresholds at which renewable hardware is allowed to drop offline. This is essential reading for anyone following the energy transition, as it demonstrates that grid stability in a renewables-heavy system depends as much on regulation as on engineering.

This scientist rewarmed and studied pieces of his friend's cryopreserved brain

MIT Technology Review reports on a scientist who received permission to study portions of a friend's cryopreserved brain, which had been stored at minus 146 degrees Celsius in Arizona for over a decade. The research represents one of the first rigorous scientific examinations of what actually happens to neural tissue during long-term cryopreservation, moving the conversation beyond theoretical debates and into empirical territory. The brain belonged to L. Stephen Coles, and the study involved carefully rewarming tissue samples to examine cellular structure preservation. The findings have implications not just for the controversial cryonics community but for the broader field of organ transplantation, where understanding freezing damage at the cellular level could improve preservation techniques for donor organs. The piece navigates the ethical complexity of studying a deceased friend's tissue while maintaining scientific rigor.

A Harvard physics professor just used Claude AI to co-author a real frontier research paper in 2 weeks

Matthew Schwartz, a Harvard theoretical physics professor, ran an experiment: could he supervise Claude like a graduate student and produce a genuine, publishable physics paper using only text prompts? The result was a high-energy physics paper on the 'Sudakov shoulder in the C-parameter,' a brutally complex quantum field theory calculation, completed in two weeks. Claude went through 110 draft versions and exchanged over 51,000 messages. The paper is now on arXiv and physicists are reading it. Schwartz says it may be the most important paper he has ever written, not for the physics, but for demonstrating the method.

The professor's years of QFT expertise powered this. Claude crunched numbers but needed that human steer to spot the Sudakov shoulder. The real story credits human guidance over AI solo genius.

— ninadpathak54 pts

The undersold part is the verification layer. The professor caught when Claude fudged parameters to make plots look right. Without that human referee, you'd have a confident-looking paper that's subtly wrong. The bottleneck isn't capability, it's verification.

— Specialist-Heat-641414 pts

PhD student here using Claude in Excel for reactor modeling. What used to take 1-2 months now runs in days. My task shifted from technical grunt work to deciding context and exploring ideas.

— bekicotman37 pts

What AI agents have blown your mind away so far?

A discussion thread asking which AI agents have genuinely impressed people beyond simple automation. The responses reveal a shift in what 'mind-blowing' means: a year ago it was 'this thing can do a task,' now it is 'this thing knows when not to bother me.' Practical examples dominated, from engineering teams claiming they have not manually written code in three months using Windsurf Cascade on Claude Opus, to orchestrator systems that one-shot 200k-token applications from a 2,100-line PRD in under 90 minutes with zero errors.

Our engineering team uses Windsurf's Cascade on Claude Opus for almost everything. Engineers haven't written a line of code manually in 3 months. They've turned into product managers guiding the AI. Engineering output doubled easily.

— dewharmony0341 pts

Built an orchestrator with plan/implement/test stages and reviewer gates. It one-shotted a 200k token app from a 2100-line PRD. 64 steps, 1 hour 25 minutes, zero errors. Literally 100x programming.

— MR19338 pts

The ones that changed how I work are not the flashy ones. An agent that monitors a data source and only interrupts me when something matters. Runs all day, says nothing most of the time.

— Specialist-Heat-641412 pts

OpenAI killed Sora because Anthropic showed up with tools people actually use

OpenAI pulled the plug on Sora and Disney immediately backed out of a billion-dollar investment deal. The post argues that while OpenAI burned GPU clusters on a video toy nobody could monetize, Anthropic was shipping things developers actually use: Claude Code, computer use, MCP, tool use. The real punchline according to the author is that Hollywood, the one industry that should have embraced AI video, saw the product up close and said no. Comments were split between agreeing with the competitive framing and noting Sora's cost structure was simply unsustainable regardless of Anthropic.

Disney saw the product before they signed. OpenAI is just a company that got lucky with brand recognition early on, becoming the 'kleenex' of AI. Other corporations are starting to figure this out.

— _Cromwell_32 pts

OpenAI will be bought on the cheap by Microsoft and continue its demise before being reborn as clippy.ai

— dontbelieveawordof1t8 pts

Sora got shut down because video gen was crazy expensive and messy, while Anthropic stayed focused on practical dev tools. Claude Code is everywhere because it actually helps people ship.

— manjit-johal10 pts

Claude Code can now /dream

The biggest post across Reddit's AI communities today. Claude Code shipped Auto Dream, a feature that mimics REM sleep to consolidate the agent's memory system. Auto Memory, shipped in February, lets Claude take notes on your project as it works. The problem: by session 20, memory files bloated with noise, contradictions, and stale context, actually degrading performance. Auto Dream runs a background sub-agent that reviews all past session transcripts, identifies what is still relevant, prunes contradictions, consolidates into organized indexed files, and replaces vague references like 'today' with actual dates. The feature runs in four phases and processes even 900+ session histories.
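The date-pinning step is easy to picture in code. The sketch below is only an illustration of the idea, not Anthropic's implementation: `pin_dates` is a made-up helper, and the three relative words it handles are an assumed, minimal set.

```python
import re
from datetime import date, timedelta

# Relative words mapped to day offsets from the session date (assumed set).
OFFSETS = {"today": 0, "yesterday": -1, "tomorrow": 1}
PATTERN = re.compile(r"\b(today|yesterday|tomorrow)\b", re.IGNORECASE)


def pin_dates(note: str, session_date: date) -> str:
    """Replace relative day words with ISO dates anchored to the session."""

    def swap(match: re.Match) -> str:
        offset = OFFSETS[match.group(1).lower()]
        return (session_date + timedelta(days=offset)).isoformat()

    return PATTERN.sub(swap, note)


note = "Today we moved auth to middleware; tests failed yesterday."
print(pin_dates(note, date(2026, 3, 25)))
# 2026-03-25 we moved auth to middleware; tests failed 2026-03-24.
```

The point of the rewrite is that a note reading 'today' is only meaningful in the session that wrote it, while a pinned date stays accurate when the note is read many sessions later.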

OK well now we need /acid to handle all of its hallucinations.

— Tiny_Arugula_5648677 pts

These commands are getting crazier. We will have /shit to cleanup AI shit soon.

— narcosnarcos215 pts

Found Ray Amjad's YouTube breakdown of the feature. AutoDream runs in four phases: transcript scanning, relevance scoring, memory consolidation, and index generation. The key insight is it preserves project-specific context while aggressively pruning session-specific noise.

— FortuitousAdroit47 pts

Usage limit bug is measurable, widespread, and Anthropic's silence is unacceptable

A detailed post consolidating widespread reports of a dramatic drop in Claude Code usage limits. Following a 2x off-peak usage promotion, baseline limits appear to have crashed to 0.25x-0.5x normal levels instead of returning to 1x. Reports flooded in over 18 hours across the community. Users on the 2x promo reported that it felt like their old standard limits. The post calls out Anthropic's complete lack of communication, noting that paying users whose livelihoods depend on these tools deserve transparency about what their subscription actually buys.

This has shaken my trust in the platform. I suspect it was a load-balancing measure after growth and partial outages. But without communication, all we can do is speculate. I find it unacceptable that there's no transparency into what $200/month actually pays for.

— ImOnALampshade56 pts

The fact there is no response is wild. Maybe this is what the subscription looks like without being subsidized. Maybe that's why they're silent.

— lolu1339 pts

It seems a cultural trend with LLM companies to gaslight users. They are moving at such pace that customer service is way down their priorities list.

— Disastrous_Bed_902682 pts

Claude Code Limits Were Silently Reduced and It's MUCH Worse

A companion post to the usage limit crisis from a long-time Claude Code user making their first-ever forum post because 'the situation has gone too far.' The user reports hitting limits faster and more aggressively than ever before despite not using plugins, keeping CLAUDE.md clean, and working on simple PHP and JavaScript. Multiple Max 20x plan users confirmed the issue, with one reporting their usage dashboard showed 41% but the CLI returned 'limit reached.' Another Max user who normally peaks at 30% hit 100% in one hour. The lack of transparency drew the most ire, with users noting that LLM companies have gotten away with unquantified 'usage limits' in a way no other industry could.

Everybody's noticing it today, except it's not a small reduction. It's like a hundredfold. It seems to be a bug more than anything.

— -becausereasons-158 pts

It feels like my Max plan was downgraded back to Pro plan usage.

— dcphaedrus63 pts

I can't think of an industry where 'usage limits' aren't actually quantified. Even cellphone data is denominated in gigabytes. Until LLM limits are quantified and audited, they can move the goalposts however they want.

— Dry-Magician141535 pts

You're not building a SaaS. You're avoiding getting a job and calling it entrepreneurship.

A developer who has built 30+ MVPs for founders says he can tell within five minutes of a call whether someone is building a business or hiding from the job market behind a Figma file and a domain name. The pattern: landing page but no users, 'refining the product' for four months without showing it to a stranger, six hours in the code editor and zero minutes talking to people who might pay, posting build-in-public updates to other builders who will never be customers. Building feels productive but if nobody is using it, you are not making progress, just staying busy to avoid the discomfort of rejection.

Harsh but mostly wrong. Founders avoiding jobs and founders building real businesses look identical for the first 6-12 months. The difference is whether they're accumulating customer intelligence or just accumulating code.

— MindVegetable989837 pts

The post misses a deeper understanding of the why. People try to build first because it's harder to sell something that doesn't exist, especially without connections or deep industry knowledge.

— Reasonable-Bear-978817 pts

You're a loser right until you succeed, then you're a visionary. But the OP is right about mistaking code editor time for productivity. The opportunity cost of 6 hours coding vs 5 hours coding + 1 hour reaching out to customers is a no-brainer.

— threwlifeawaylol11 pts

We built a 3,000-person SaaS community starting from a 16-person meetup in Zagreb

In November 2021, the author booked a table at a bar in Zagreb and invited every SaaS person he could find in Croatia. Sixteen people showed up. He went in expecting to generate leads for ChartMogul but instead made friends who remain his closest four years later. Since then they have run 60+ events across 15 cities in Central and Eastern Europe, and the community now has 3,000 members. Last year they held their first destination conference at a resort in Sibenik, Croatia, with 300 attendees. The key lesson: keeping events deliberately rough and scrappy forces genuine conversation and weeds out conference-chasers.

The 'rough on purpose' bit is the secret sauce. When you start glossy, you attract content tourists. Building where there's a vacuum means you become the default watering hole and trust compounds fast.

— Much_Pomegranate38001 pts

I will always prefer this style of conference. I go there to mingle with people. I can watch a lecture online.

— varnajohn1 pts

Enterprise came early

An enterprise prospect came through a referral six months into building and the conversation moved so fast that by the time the founder knew what they were dealing with, they were mid-procurement with a company that expected infrastructure they did not have. They won the deal but spent the next three months answering questions a more prepared company would have handled in a week. The product was never the problem, which made the experience frustrating. Now enterprise is becoming a real part of their pipeline but the gaps that slowed them down on the first deal are still there, just less visible.

The things that slowed you down on the first deal are not going to disappear on their own. Block out a week before the next enterprise conversation and work through them properly.

— Ill-Bag482313 pts

Start building the enterprise infrastructure now before the next one forces you to. The gaps that slowed you down on the first deal are always the same ones that come up on the second.

— ExchangeOld75176 pts

Talk to whoever ran procurement on that deal and ask them directly what would have made it faster. Most procurement teams will tell you exactly what they need if you ask.

— Numerous_Revenue55853 pts

How do keywords actually help in SEO?

A beginner question that generated a useful practitioner thread. The consensus from experienced SEOs is that keywords still matter but not in the old 'exact match everywhere' way. They now function as signals of user intent rather than targets to stuff into content. The practical approach most described: pick one primary keyword, build around related terms and questions people actually search, and write naturally to cover the topic deeply. Multiple commenters confirmed that pages rank for hundreds of keyword variations just by answering the intent clearly without forcing phrases. Over-optimization shows up when writing feels forced.

Keywords are signals, not stuffing. I've had pages rank for tons of variations just by writing naturally and going deeper on the topic. If it reads like something you'd want to read, you're probably fine.

— crawlpatterns8 pts

Think of keywords as direction for the page, not something to chase line by line. Google understands context now. Build the page around what the person is actually trying to figure out.

— rohitkurup6 pts

Has anyone else had their residential proxies start failing on LinkedIn recently?

A practitioner reports that residential proxy setups that worked reliably for a year are now returning 70-80% failure rates on LinkedIn, with accounts getting flagged, captcha loops, and full restrictions starting around January 2026. The problem is IP pool saturation: the same residential IPs are being recycled across too many proxy providers. The only configuration holding up consistently is 4G/5G mobile proxies, where carrier IPs through CGNAT infrastructure are trusted because millions of real professionals use LinkedIn on their phones through the same networks. LinkedIn cannot flag carrier IPs without also flagging legitimate mobile users.

LinkedIn got tired of the proxy arms race and decided to nuke anything that isn't actual carrier traffic. Your failure rate is them saying 'we know what you are' without banning you. 4G works because you're hiding in plain sight.

— kubrador1 pts

LinkedIn's detection got significantly more aggressive around Q4. Switched to mobile on Proxyrack, carrier IPs through CGNAT are the only thing holding up reliably.

— Agreeable_Bat82761 pts

SEO Digest: Google may let publishers opt out of AI features in Search

A weekly SEO news roundup covering three significant developments. First, Google's Personal Intelligence feature is expanding beyond paid plans to free users in the US across AI Mode in Search, the Gemini app, and Gemini in Chrome. Second, Google says it is developing controls that would let publishers specifically opt out of generative AI features in Search. Third, Google is now rewriting AI-generated headlines that appear in Search results. The irony was not lost on commenters: Google is offering an opt-out while simultaneously making AI Overviews appear more often, which one user compared to a waiter asking if you want ketchup while already pouring it on your fries.

Google's basically saying 'yeah we'll let you opt out' while simultaneously making AI overviews appear more often. It's like a waiter asking if you want ketchup while pouring it on your fries.

— kubrador1 pts

UCP looks like preparation for us to stop going to store websites altogether. For big brands ok, for small ones - the death of recognition.

— Due-Bear-24883 pts

The fear of ranking penalties for opting out is very real. Most publishers will feel pressured to stay opted in just to avoid losing visibility.

— Chara_Laine1 pts

Surrounded, but Not Nourished

A Substack essay examining the paradox of modern attention through the lens of Byung-Chul Han's concept of hyperattention and Simone Weil's writings on genuine attention. The thesis is that boredom is not something to fix but something we need. We are surrounded by stimulation yet starved of the deep nourishment that comes from genuine engagement. The constant filling of every gap by technology has eliminated the practice of filling time yourself, and with it, the capacity for the kind of attention Weil described as the rarest and purest form of generosity. The most resonant comment came from someone who grew up in the countryside with no TV or computer, recalling that the boredom was actually training.

This took me back to childhood. No TV, no computer. Just long stretches of boredom. I was the master of filling that time with my own games and thoughts. That's what's gone. Not just the quiet but the practice of filling it yourself. The filling is done for you before you even notice the gap.

— Foreign-Barber323712 pts

Growing up dealing with boredom the way kids used to. These days I can't just sit, but at least I can walk local trails without headphones.

— GardenPeep2 pts

Would love to hear the thoughts of some serious reader on Consciousness

A link to a blog post titled 'Space, Time, and the Levels of Mind' exploring consciousness through a framework connecting mind to physical laws. The piece argues for a universal shared consciousness that transcends individual experience. Comments were sharply divided: one respondent engaged seriously, noting that while they understand the 'you are one with the universe' argument intellectually, daily experience contradicts it and the speed of causality remains an observed reality. Another dismissed the entire piece as 'pseudo-scientific woo with no basis in reality,' arguing that philosophy is about gaining accurate understanding, not fantasizing about ideas we have every reason to believe are untrue.

It boils down to 'you are one with the universe.' I understand that on some level, yet my daily experience is different. Every time humans talk about consciousness it feels like we are missing something big. Maybe it's simply out of our reach.

— Sitheral3 pts

Pseudo-scientific woo. There is no super conscious universal shared state, there is no 'soul.' Philosophy is about gaining accurate objective understanding, not fantasizing about ideas we have every reason to believe aren't true.

— Prowlthang2 pts

The Nasadiya Sukta (RigVeda 10.129): A Philosophical Exploration of Creation and Limits of Knowledge

A PhilPapers link to a scholarly analysis of the Nasadiya Sukta, one of the most philosophically rich hymns in the Rig Veda, dating to roughly 1500 BCE. The hymn famously questions the origin of creation with radical skepticism: 'There was neither death nor immortality then.' The text acknowledges that the origin of the universe is an impenetrable mystery and questions whether even the gods could know, since they came after creation. The academic paper examines the hymn's epistemological humility and its striking parallel with modern philosophical questions about the limits of knowledge. Comments were sparse but thoughtful, noting that understanding these limits is the beginning of true clarity.

There was neither death nor immortality then. The text acknowledges that the origin of the universe is an impenetrable mystery. Understanding these limits is the beginning of true clarity.

— Smile_Like_Arsenic1 pts

Apple Business

All-in-one business platform from Apple

Video.js v10 Beta

Rebuilt from scratch to be 88% smaller

Flighty Airports

Real-time airport intelligence from the Flighty team

DeepSeek Just Fixed One Of The Biggest Problems With AI

Two Minute Papers covers DeepSeek's Engram project, which addresses one of the core limitations of current AI systems. The video breaks down the research paper and explains why this approach to memory and context retention could meaningfully change how AI agents handle long-running tasks.