Wednesday, February 26, 2026

Artwork of the Day

The elders pressed their stamps in silence, gold on indigo, a language without sound— each glyph a door to what was known before the knowing had a name. Return, they say. The bird looks back. The future is behind us.

Faces of Grit

Captain Lakshmi Sahgal

The doctor who traded her stethoscope for a revolution

Anthropic refuses Pentagon demand to loosen military AI restrictions, faces Defense Production Act threat

Anthropic is holding firm against Pentagon pressure to remove safety guardrails that prevent its AI from being used for autonomous weapon control and domestic surveillance. At a meeting between CEO Dario Amodei and Defense Secretary Pete Hegseth, Hegseth delivered an ultimatum: comply by Friday or face the Defense Production Act, a law that can compel companies to cooperate with government orders. Legal experts say invoking the Act against an AI company would be unprecedented and could trigger a wave of lawsuits. Amodei has argued that existing safeguards do not interfere with legitimate defense applications. The standoff has become a defining moment for the AI industry's relationship with the military.

Nvidia posts another record quarter as AI capex spending goes exponential

Nvidia reported yet another record-breaking quarter, driven by insatiable demand for AI training and inference chips. CEO Jensen Huang declared that 'the demand for tokens in the world has gone completely exponential,' reflecting the scale of capital expenditure by hyperscalers building out AI infrastructure. The results come amid broader questions about whether the current pace of AI investment is sustainable or approaching bubble territory. Nvidia's data center revenue continues to dwarf all other segments. The company's market dominance shows no signs of weakening as competitors struggle to deliver competitive alternatives at scale.

Anthropic acquires computer-use AI startup Vercept after Meta poaches co-founder

Anthropic has acquired Seattle-based Vercept, a startup that built complex agentic tools including a computer-use agent capable of completing tasks inside applications like a person sitting at a laptop. The acquisition comes after Meta reportedly poached one of Vercept's co-founders, highlighting the intense talent war in the AI agent space. The deal strengthens Anthropic's push into autonomous computer-use capabilities, an area where Claude has been steadily gaining ground. Financial terms were not disclosed.

Judge dismisses xAI's trade secrets lawsuit against OpenAI for lack of evidence

A federal judge has tossed Elon Musk's lawsuit accusing OpenAI of poaching eight xAI employees to steal trade secrets related to its data centers and Grok chatbot. US District Judge Rita Lin ruled that xAI failed to provide any evidence of actual misconduct by OpenAI, noting that xAI appeared fixated on former employees' conduct rather than demonstrating that any stolen secrets were actually used. The ruling undercuts a key legal strategy in the ongoing rivalry between Musk's xAI and OpenAI. The judge found that even reading an ex-employee's text messages in the most favorable light possible for xAI still failed to support the claims.

Perplexity launches Computer, bundling rival AI models into one agentic workflow system for $200 per month

Perplexity has launched a new product called Perplexity Computer, a browser-based interface that orchestrates multiple AI models from different providers into unified agentic workflows. Users describe the outcome they want and the system spins up sub-agents for web research, document creation, data processing, and API calls. It currently runs Opus 4.6 as its core model, supplemented by Gemini, Grok, ChatGPT 5.2, and specialized models for image and video generation. The product is available as part of the Max plan at $200 per month, positioning Perplexity as a meta-orchestration layer sitting atop the entire AI model ecosystem.

Samsung unveils Galaxy S26 lineup with 'agentic AI' features and higher prices

Samsung has announced its Galaxy S26, S26 Plus, and S26 Ultra, all powered by Qualcomm's Snapdragon 8 Elite Gen 5 chip. Samsung is calling them the first 'agentic AI phones,' with features including AI-powered call screening, photo editing via text prompts, and a Google Gemini integration that can carry out tasks in third-party apps like Uber and DoorDash on your behalf. Despite limited hardware upgrades and a regression from titanium to aluminum on the Ultra, the two cheaper models have each increased in price by $100. The Ultra holds at $1,300.

US cybersecurity agency CISA reportedly in crisis amid Trump administration cuts

During the first year of the Trump administration, CISA has faced severe cuts, layoffs, and furloughs that bipartisan lawmakers and cybersecurity industry sources say have left the agency unprepared to handle a major crisis. The gutting of America's primary cyber defense agency comes at a time when ransomware attacks and nation-state cyber operations are intensifying. The report raises serious questions about the country's ability to respond to large-scale cyberattacks on critical infrastructure.

Why 2026 is the year for sodium-ion batteries

Sodium-ion batteries are finally making the leap from lab curiosity to commercial product, landing on MIT Technology Review's 10 Breakthrough Technologies of 2026 list. Unlike lithium-ion cells, sodium-ion batteries use abundant, cheap materials that do not require mining in geopolitically sensitive regions. The technology is now being deployed in electric vehicles and grid-scale energy storage arrays. Chinese manufacturers are leading commercialization, with HiNa Battery Technology pushing salt-based cells toward mass production. The batteries trade some energy density for dramatically lower cost and improved safety characteristics, making them ideal for stationary storage and urban EVs where range anxiety is less of a concern.

Open source's new dilemma: when your test suite is enough to rebuild your product

Tldraw, the collaborative drawing library, is moving its test suite to a private repository after it became apparent that a comprehensive test suite is enough for AI coding agents to rebuild an entire library from scratch, potentially in a different language. The move was reportedly prompted by Cloudflare's project to port Next.js to use Vite in a week using AI. This raises uncomfortable questions for every open source project with a commercial business model: the very artifacts that prove your code works also provide a complete specification for replacing it. Tldraw filed a joke issue to 'translate source code to Traditional Chinese' to defend against AI replication, highlighting the absurdity of the situation. As AI coding tools improve, the tension between open source transparency and commercial viability will only grow.

How will OpenAI compete?

Benedict Evans examines OpenAI's competitive position as the AI landscape shifts from a model-quality moat to an execution and distribution game. With Anthropic, Google, Meta, and a growing field of open-weight alternatives all converging on similar capability levels, Evans argues that OpenAI's real challenge is no longer being the smartest model in the room but figuring out what kind of company it wants to be. The analysis explores whether OpenAI can sustain its consumer brand advantage, build defensible enterprise products, or whether it risks becoming the Netscape of AI — the company that defined the category but lost to better-resourced competitors.

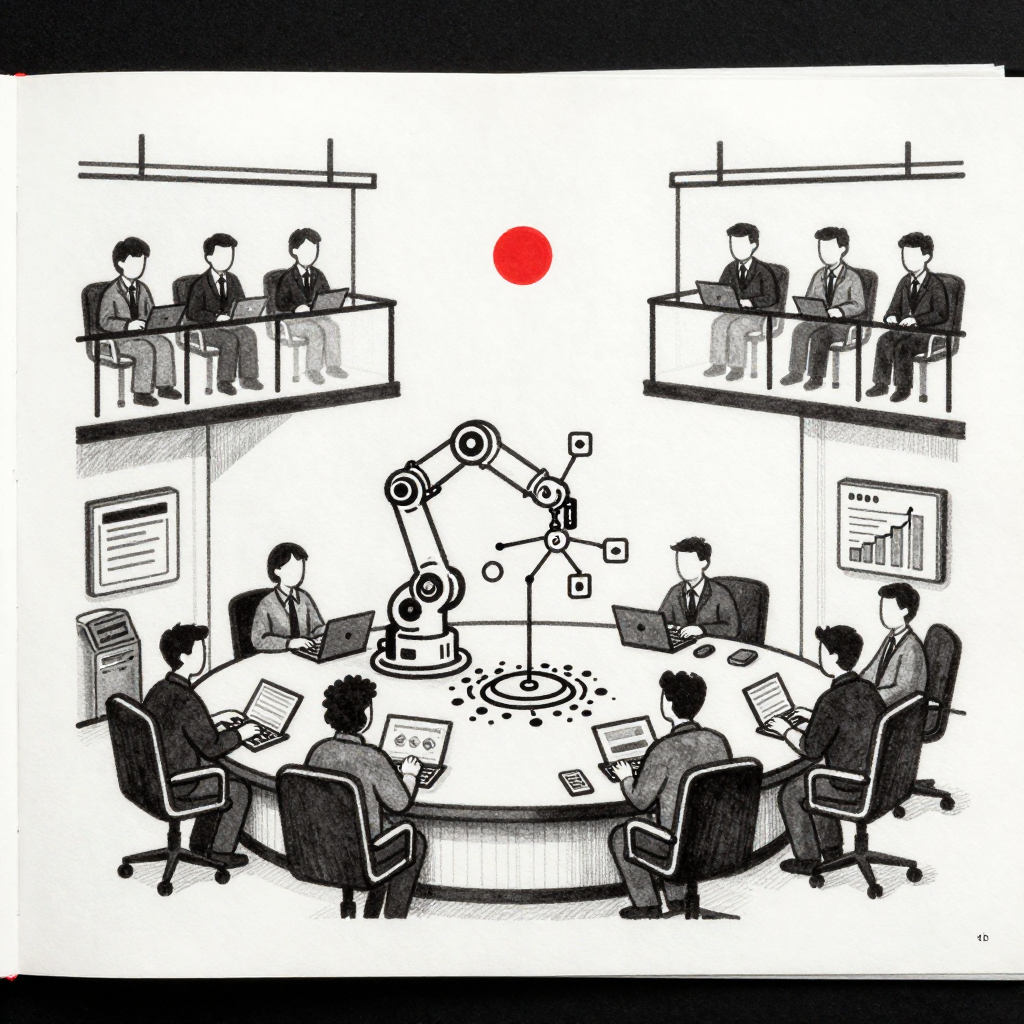

We built an AI agent for our operations team - 6 months later here's what actually happened

A team shares an unusually honest post-mortem of deploying AI agents for internal operations. Their ops team was spending roughly 60% of its time on tasks that followed predictable decision trees — smart people doing robotic work. They partnered with an AI agent development company rather than building entirely in-house, starting with rule-heavy workflows before gradually increasing autonomy. The post details what worked, what broke, and the unexpected second-order effects that nobody predicted during planning.

Starting with a rule-heavy workflow was probably the biggest win here. Curious how you are handling evaluation now that autonomy has increased — periodic audits or tracking quality thresholds to catch drift over time?

— latent_signalcraft, 9 pts

What model are you using? Are you also using something like OpenClaw? Where are you hosting it, and what is the total cost including models, hosting, plus integrated services?

— OrganizationWinter99, 3 pts

I made MCPs 94% cheaper by generating CLIs from MCP servers

Every AI agent using the Model Context Protocol is quietly overpaying — not on API calls, but on the instruction manual. Before an agent can do anything useful, MCP dumps the entire tool catalog into the conversation as JSON Schema. With a typical setup of 6 MCP servers and 84 tools, that is roughly 15,500 tokens before a single tool is called. The author built a system that generates lightweight CLIs from MCP servers, using lazy loading to reduce session start cost to around 300 tokens — a 98% reduction. Over 100 tool calls, the savings still hold at 94%.
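The idea of deferring tool schemas until they are needed, and the startup saving quoted above, can be sketched roughly as follows. This is not the author's implementation: `LazyToolCatalog`, its methods, and the two example tools are hypothetical names made up for illustration. Only the 15,500 and 300 token figures come from the post.

```python
from typing import Callable, Dict, List


def percent_reduction(before: float, after: float) -> float:
    """Percentage saved when a cost drops from `before` to `after`."""
    return (before - after) / before * 100


class LazyToolCatalog:
    """Hypothetical catalog: names are cheap to list, schemas load on demand."""

    def __init__(self, loaders: Dict[str, Callable[[], dict]]) -> None:
        self._loaders = loaders
        self._loaded: Dict[str, dict] = {}

    def names(self) -> List[str]:
        # Only this short list would go into the prompt at session start.
        return sorted(self._loaders)

    def schema(self, name: str) -> dict:
        # The full schema is built the first time a tool is actually needed.
        if name not in self._loaded:
            self._loaded[name] = self._loaders[name]()
        return self._loaded[name]

    def loaded_count(self) -> int:
        return len(self._loaded)


# Two made-up tools standing in for the 84 mentioned in the post.
catalog = LazyToolCatalog({
    "search_issues": lambda: {"type": "object", "properties": {"query": {"type": "string"}}},
    "create_file": lambda: {"type": "object", "properties": {"path": {"type": "string"}}},
})

catalog.schema("search_issues")  # only this one schema is materialized

# Token counts quoted in the post: 15,500 up front versus about 300.
startup_saving = percent_reduction(15_500, 300)
print(f"{startup_saving:.1f}% fewer tokens at session start")
```

The 94% figure over 100 tool calls depends on per-call costs the summary does not give, so it is not reproduced here.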

Maybe call it RAMCP? Retrieval Augmented Model Context Provider. CLI is kind of a generic term and doesn't fit what you are doing.

— Crafty_Disk_7026, 10 pts

I get 404 on the GitHub link. Did something break?

— Final_Alps, 3 pts

I want to learn agentic AI from scratch — where do I actually start?

A data scientist with a coding background asks how to break into agentic AI without spending lakhs on courses. The thread becomes a useful resource guide, with experienced practitioners arguing that the real bottleneck is not theory or frameworks but the gap between reading about agents and forcing yourself to build broken prototypes that do one tiny thing. The consensus: pick your most annoying repetitive workflow, build a 200-line agent prototype, and iterate from there.

Start by picking one concrete workflow from your data science background where you manually repeat a few steps. Strip it down to a minimal agent that calls an LLM once, uses a tool, or returns a fixed result. Iteratively add memory, planning, or multi-step logic instead of trying to learn everything at once.

— One_Philosophy_1847, 15 pts
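A minimal agent of the kind this comment describes might look something like the sketch below. Everything in it is an assumption for illustration: `fake_llm` is a keyword-matching stand-in for a real model call, and `word_count` is a toy tool. The point is only the shape: one decision, one tool, one fixed fallback.

```python
from typing import Callable, Dict


def fake_llm(prompt: str) -> str:
    """Stand-in for a real LLM call (hypothetical): picks a tool by keyword."""
    return "word_count" if "count" in prompt.lower() else "none"


def word_count(text: str) -> str:
    """Toy tool: count whitespace-separated words."""
    return str(len(text.split()))


# Registry of available tools, keyed by the name the "model" returns.
TOOLS: Dict[str, Callable[[str], str]] = {"word_count": word_count}


def run_agent(task: str, payload: str) -> str:
    """Single-step agent: one model decision, then at most one tool call."""
    choice = fake_llm(task)
    print(f"[agent] model chose: {choice}")  # print statements expose the call flow
    tool = TOOLS.get(choice)
    if tool is None:
        return "no tool needed"  # fixed fallback result
    return tool(payload)


print(run_agent("Count the words in this note", "ship the broken prototype first"))
```

Memory, planning, or multi-step logic can then be added one piece at a time by replacing `fake_llm` with a real model client and wrapping `run_agent` in a loop.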

No one is making money except Nvidia. Agentic AI requires basic understanding of problem solving, while loops, and adding print statements to understand the LLM call flow.

— Acrobatic-Aerie-4468, 10 pts

Gave Claude Code the ability to produce beautiful diagrams — now I focus more on architecture

The top post of the day showcases a developer who integrated interactive diagram generation into Claude Code, moving beyond basic Mermaid markdown to produce rich visual representations of system architecture. The approach lets the model see system structure visually, which commenters argue leads to better decisions about where changes should go instead of pattern-matching against the nearest file. The post includes a video demonstration of the workflow in action.

Architecture-first is the right approach with agentic coding. When the model can see the system structure visually, it makes better decisions about where changes should go instead of just pattern-matching against the nearest file.

— dee-jay-3000, 31 pts

You can already get basic diagrams with md files and Mermaid, but this is pretty cool and interactive.

— fujimonster, 20 pts

Massive degradation in quality or API responsiveness this morning

A widespread outage hit Claude Code users on the Max 20x plan, with instances unable to produce more than a few lines of output before completely freezing. Users reported consistent 500 errors across different terminal windows and request complexities. With 140 comments, this became the most active discussion of the day, as frustrated paying users shared their experiences. The thread captures the tension between Anthropic's premium pricing and service reliability.

The Hegseth Effect.

— Forward-Ad-8116, 40 pts

Same, and it seems to be elevating.

— ufii4, 31 pts

Same here under the Max 20x as well. Unusable.

— Large_Diver_4151, 24 pts

If you aren't creating skills for your own project, start now

A developer shares how building a library of custom Claude Code skills — specialized tools for unit testing, markdown generation, workflow development, brainstorming, session journaling, and task management — has dramatically improved output quality. The key insight: skills function like a precise employee training manual, reducing guesswork and eliminating the need to re-explain patterns. They also implemented a local caching system for web requests that prevents redundant API calls and saves tokens. The thread spawned creative responses, including one user who built a self-evolving skill system that automatically creates new skills when it detects repeated patterns.

With the power of making ideas reality, this is exactly what you should do. Hooks, pre and post. You can also invoke and run daemons that can start Claude instances for periodic repo updates.

— SteiniOFSI, 18 pts

I created a skill called 'evolve-yourself' that stores a JSON database of common patterns with creation and last-used dates. It automatically creates new skills based on frequency — if a pattern appears 3+ times in one day, it generates a skill immediately.

— Fluent_Press2050, 13 pts
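The frequency rule in this comment could be sketched along these lines. This is a guess at the general shape, not the commenter's code: `PatternTracker`, its field names, and the example pattern are hypothetical. Only the JSON store, the creation and last-used dates, and the 3-per-day threshold come from the comment.

```python
import json
from datetime import date
from typing import Dict

DAILY_THRESHOLD = 3  # from the comment: 3+ occurrences in one day


class PatternTracker:
    """Hypothetical JSON-backed store of recurring patterns."""

    def __init__(self) -> None:
        self.db: Dict[str, dict] = {}

    def record(self, pattern: str, day: date) -> bool:
        """Log one occurrence; return True when a skill should be generated."""
        key = day.isoformat()
        entry = self.db.setdefault(pattern, {
            "created": key,
            "last_used": key,
            "daily_counts": {},
            "skill_created": False,
        })
        entry["last_used"] = key
        entry["daily_counts"][key] = entry["daily_counts"].get(key, 0) + 1
        # Trigger once, the first time the daily count reaches the threshold.
        if entry["daily_counts"][key] >= DAILY_THRESHOLD and not entry["skill_created"]:
            entry["skill_created"] = True
            return True
        return False

    def to_json(self) -> str:
        return json.dumps(self.db, indent=2)


tracker = PatternTracker()
today = date(2026, 2, 26)
results = [tracker.record("write-unit-test", today) for _ in range(4)]
print(results)
```

Here the third occurrence on the same day triggers skill creation, and later occurrences do not trigger it again.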

Product does $15K MRR. Got a $2M acquisition offer. Still thinking about why I said no.

The most-discussed SaaS post of the day. A founder received a serious $2M acquisition offer for a product doing $15K MRR — roughly an 11x multiple on annual revenue. They spent three weeks building spreadsheets, talking to their spouse, and losing sleep before declining. The financial argument for taking it was strong: $2M is life-changing money, the product could plateau, competition could intensify. The argument for keeping it was partly financial and partly emotional: $15K MRR with room to grow means long-term value could exceed the offer. The comments were sharply divided.
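The 11x figure can be checked by annualizing the monthly revenue. The short sketch below uses only the two numbers from the post. The function name is made up for illustration, and flat revenue is an assumption.

```python
def revenue_multiple(offer: float, mrr: float) -> float:
    """Offer price divided by annualized recurring revenue (MRR x 12)."""
    return offer / (mrr * 12)


mrr = 15_000        # monthly recurring revenue from the post
offer = 2_000_000   # acquisition offer from the post

arr = mrr * 12
multiple = revenue_multiple(offer, mrr)
print(f"ARR: ${arr:,}")
print(f"Offer multiple: {multiple:.1f}x ARR")
```

At flat revenue, the same ratio is also the number of years of gross revenue the offer represents, which is part of why the decision was close.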

I turned down $7M for one of several projects. Changes in AI have resulted in a current valuation of $3M. Things can go both ways. The future is not just a continuation of the past.

— CodSpiritual8618, 89 pts

It was an insanely bad decision, assuming it was real.

— 0xFatWhiteMan, 65 pts

Investor told me my business 'isn't venture scale.' He was right and I don't care.

A bootstrapped founder took a meeting with a VC out of curiosity. The investor liked the product, liked the market, understood the problem — then said the inevitable words every bootstrapped founder hears: 'This is a great business but it is not venture scale.' Translation: you are not going to become a billion-dollar company and we cannot invest in something that might only become a $10M or $20M business. The founder's response: that is exactly the point. The thread became a thoughtful discussion about the structural mismatch between venture capital fund economics and solidly profitable businesses.

VC-scale is about fund economics, not business quality. Profitability, control, and sustainability are undervalued because they are quieter outcomes.

— Anantha_datta, 13 pts

A $200M fund needs to return $600M+ to LPs, so each portfolio company needs a plausible path to a $500M+ exit. A company doing $5M ARR at 30% margins is phenomenal for founders but literally cannot generate the multiple a fund needs.

— dee-jay-3000, 5 pts

How do you actually grow your early SaaS business without paying $500+ on ads?

An 18-year-old first-time founder asks the evergreen question of early-stage growth without ad spend. The thread delivered practical advice: direct outreach and communities beat blogs and videos at the earliest stages. Content works but it is a long game. The consensus was clear — distribution in the early days is manual, not scalable, and that is normal. Multiple founders shared that their first 10 customers came from finding communities where potential users already gathered and solving problems visibly.

Don't start with ads, they usually just burn money before you understand your user. My first customers came from direct outreach and communities. Distribution early on is manual, not scalable. That is normal.

— Anantha_datta, 16 pts

Ads are an amplifier, not a creator of product-market fit. Leverage intent-based outreach: scrape high-intent keywords in niche communities, pitch your tool as a direct solution.

— AwayVermicelli3946, 4 pts

What's your most underrated SEO tip that actually brought real results?

The top marketing post of the day asked for underrated SEO tips that actually moved the needle. The highest-voted practical advice: improving internal anchor text and content context across existing pages. It sounds small, but ensuring links point to relevant pages with descriptive anchors can boost rankings across multiple pages without creating new content. The caveat from experienced practitioners: anchor tweaks only help when the destination page actually deserves the link — fix weak page structure first, then optimize the links pointing to it.

Improving internal anchor text and content context. Go through your existing content and make sure links point to relevant pages with descriptive anchors — you can boost rankings across multiple pages without adding new content.

— ResponsiblePanda1140, 9 pts

Anchor tweaks help, but only when the destination page actually deserves the link. I wasted time optimizing anchors to pages with weak structure. Once I improved the target page layout, the same internal links suddenly started passing value.

— UkraineWorldlove, 2 pts

Is organic marketing the worst decision for a startup?

A founder observes that every service startup they know is grinding on organic LinkedIn content — posting daily, behind-the-scenes, lessons learned — and getting 10 to 20 likes, mostly from coworkers. Meanwhile, companies running ads and retargeting are the ones showing up everywhere and grabbing clients. The thread produced a sharp insight: organic is not the problem, expecting organic to bring clients fast is. Ads and outbound create demand. Organic captures and compounds it later. Without distribution, organic content is just journaling in public.

Organic is not the problem. Expecting organic to bring clients fast is. Ads and outbound create demand. Organic captures and compounds it later. Organic without distribution is just journaling in public.

— hellorenn, 26 pts

If you need clients now, your best plan is outbound — cold emails, cold calls. But still work on inbound for the long-term growth.

— Smart-Total-7099, 4 pts

Boutique startup: should I invest in paid ads or focus on SEO?

A new boutique startup founder with a limited budget asks the classic paid-versus-organic question. The thread produced a nuanced answer: it is not either-or. Use small-budget ads to test which products convert, simultaneously build SEO foundations with optimized product pages and local SEO, and invest heavily in branding and trust through Instagram, user-generated content, and reviews. The key insight: in the early stages, clarity of positioning matters more than the choice between ads and SEO. If people do not clearly understand why your boutique is different, neither channel will work well.

Use small-budget ads to test what products convert. Simultaneously build SEO foundations. In early stages, clarity of positioning matters more than ads vs SEO. If people don't understand why your boutique is different, neither will work.

— Akshaya_Wibits, 7 pts

Paid gives immediate traffic. SEO will take almost a year to give you a return.

— getblackbox_io, 3 pts

Reality is not beyond our rational reach — subjectivity is how reality becomes knowable

An article from the Institute of Art and Ideas argues that our subjective perspective does not cut us off from reality but is the very mechanism through which reality becomes knowable. Objectivity, the author claims, arises historically and biologically through the evolution of life, culture, and especially language. The piece challenges the Kantian tradition that posits an unknowable thing-in-itself beyond our perceptions. The comments pushed back substantively, with critics noting the argument smuggles in quasi-Aristotelian teleology and fails to address Wittgensteinian objections that such conceptions are language games rather than deep ontology.

This smuggles in quasi-Aristotelian teleology. The bird does not have wings 'so it can fly' — that is a pre-Darwinian anthropomorphization of natural selection across deep time. It also lacks a good response to the Wittgensteinian accusation that such conceptions are language games, not deep ontology.

— 2SP00KY4ME, 24 pts

The author conflates subjective reality of the self with some kind of quasi-objective reality. Objectivity cannot be captured by the observation that things change in patterns and rational beings perceive these changes. We can reference objective reality as rational agents, but we cannot actually know if our guesses are True.

— whiterook13, 15 pts

Product Hunt data unavailable

Product Hunt blocked automated access today

Adobe and NVIDIA's New Tech Shouldn't Be Real Time. But It Is.

A breakdown of a new real-time rendering technique jointly developed by Adobe and NVIDIA that achieves glinty, physically accurate material rendering at interactive framerates. The paper demonstrates specular microstructure rendering — the kind of shimmering detail you see on brushed metal or car paint — running in real time, which was previously considered too computationally expensive for anything outside offline rendering.

Gemini 3.1 Pro and the Downfall of Benchmarks: Welcome to the Vibe Era of AI

A deep analysis of Google's Gemini 3.1 Pro release and what it reveals about the declining usefulness of AI benchmarks. The video examines a new SimpleBench record, hallucination rates, and the growing gap between benchmark performance and real-world capability. Features analysis from seven papers and posts, including Anthropic's research on measuring agent autonomy and Francois Chollet's take on agentic coding versus machine learning.